Unite 100 000 Women in Tech to Drive Change with Purpose and Impact.

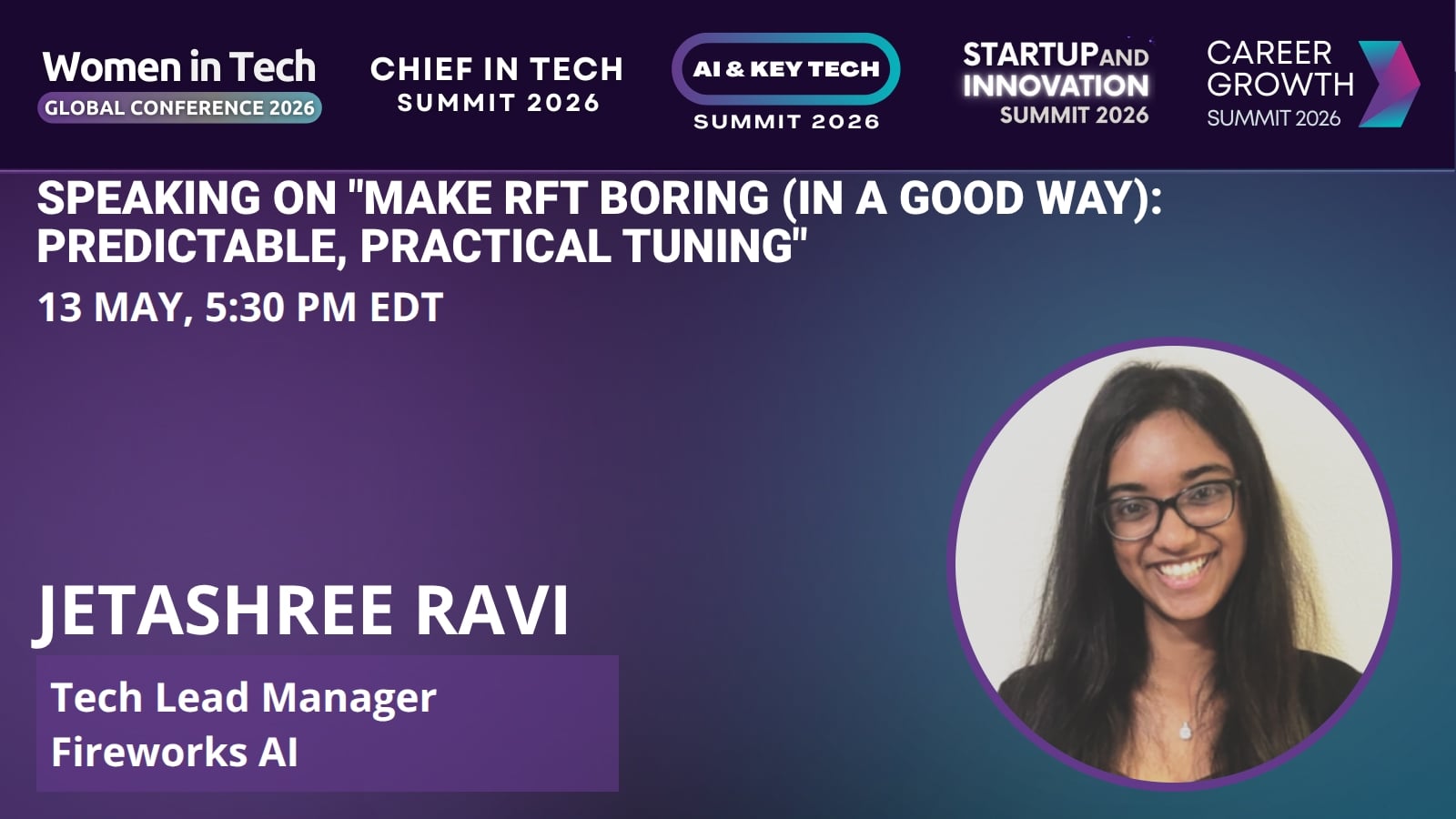

Session: Make RFT Boring (In a Good Way): Predictable, Practical Tuning

Reinforcement Fine-Tuning (RFT) can be a game-changer for improving model behavior, but getting it to work well in practice isn’t always smooth. Iteration can feel slow, evaluators can be noisy, reward signals can get “creatively interpreted,” and building the right training data often takes more trial and error than expected.

In this masterclass, we’ll share practical, production-tested recipes for making RFT faster, more reliable, and less painful. We’ll cover how to structure datasets that actually help, build evaluation loops you can trust, avoid reward hacking traps, and iterate quickly without sacrificing quality. We’ll also keep the session interactive with audience questions throughout, so we can ground the discussion in real challenges people are facing today.

Attendees will leave with clear frameworks, hard-earned lessons, and actionable next steps to bring back to their own RFT workflows.

Key Takeaways

- RFT succeeds when treated as an iterative system: well-structured datasets, reliable evaluation loops, and carefully designed reward signals are key to improving real-world model behavior.

Bio

Jetashree Ravi is a Tech Lead Manager at Fireworks AI, where she leads a team of applied machine learning engineers building production-grade AI systems for companies including Figma, Uber, Cognition, and Sentient Foundation. Her work focuses on large language models, high-performance GPU inference, and developer platforms that make enterprise AI reliable at scale. She is also a co-author of FIREBENCH and an active member of the AI builder community, where she organizes and judges hackathons.