Vote by Sharing

Unite 100 000 Women in Tech to Drive Change with Purpose and Impact.

Do you want to see this session? Sharing it increases its share count and visibility. Sessions with 10+ votes will be available to career ticket holders.

Please note that it may take some time for your share and vote to be reflected.

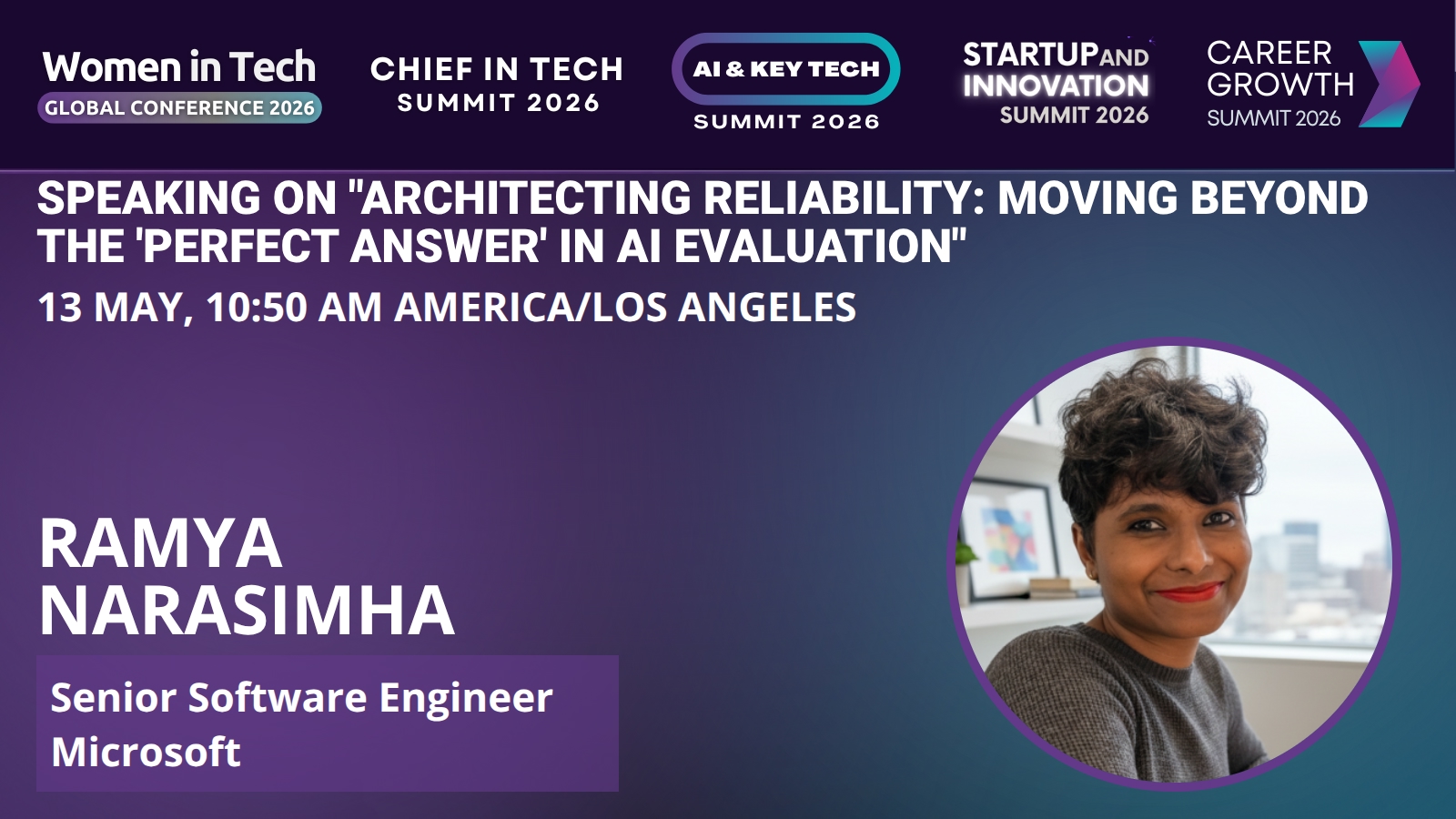

Session: Architecting Reliability: Moving Beyond the 'Perfect Answer' in AI Evaluation

In 2026, the challenge with AI isn’t just generating correct answers; it’s knowing when to trust them. Most AI evaluation today still centers on a narrow set of metrics: accuracy, latency, and fluency. While these are easy to measure, they say very little about how an AI system actually behaves when information is incomplete, signals conflict, or verification fails. This talk explores why current evaluators are poorly equipped to assess reasoning quality and user trust, and why evaluating internal chains of thought is neither stable nor sufficient.

Instead, we’ll examine a shift toward evaluating what the system checked: which assumptions were verified, what evidence was available, and where uncertainty was explicitly surfaced. By focusing evaluation on transparency and verification, rather than polished outputs alone, we can design AI systems that users trust even when answers are imperfect. This session offers practical, engineering-driven insights into how evaluation strategies shape user confidence and will define the next generation of trustworthy AI systems.

Key Takeaways

- Users trust AI systems not because the answers are perfect, but because the system makes its verification and uncertainty visible.
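
As a rough illustration of "evaluating what the system checked", the sketch below scores a response by its verification record rather than by its answer text. It is not taken from the talk: the `Check` and `Response` structures, the `transparency_report` function, and the idea of a "silent gap" (an unverified assumption the response never flags) are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Check:
    """One assumption the system tried to verify (hypothetical structure)."""
    assumption: str
    verified: bool
    evidence: list[str] = field(default_factory=list)


@dataclass
class Response:
    """An answer plus the record of what was checked (hypothetical structure)."""
    answer: str
    checks: list[Check]
    stated_uncertainty: list[str] = field(default_factory=list)


def transparency_report(resp: Response) -> dict:
    """Summarize what was checked instead of grading the answer text."""
    total = len(resp.checks)
    verified = [c for c in resp.checks if c.verified]
    unverified = [c.assumption for c in resp.checks if not c.verified]
    # An unverified assumption is a "silent gap" if the response never flags it.
    silent_gaps = [a for a in unverified if a not in resp.stated_uncertainty]
    return {
        "verified_fraction": len(verified) / total if total else 0.0,
        "evidence_backed": sum(1 for c in verified if c.evidence),
        "unverified": unverified,
        "silent_gaps": silent_gaps,
    }


# Illustrative data only; not a real incident or a real evaluation result.
resp = Response(
    answer="The outage was caused by an expired certificate.",
    checks=[
        Check("certificate expired before the outage", True, ["cert metadata"]),
        Check("no deployment happened in the same window", False),
    ],
    stated_uncertainty=["no deployment happened in the same window"],
)
report = transparency_report(resp)
print(report)
```

In this sketch an imperfect answer can still score well on transparency: the one assumption that could not be verified is explicitly surfaced, so it does not count as a silent gap.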

Bio

Ramya Narasimha is a Senior Software Engineer at Microsoft, where she builds large-scale AI-powered systems focused on reliability, evaluation, and user trust. Her work centers on designing AI platforms that operate in complex, real-world environments, where correctness alone isn’t enough and transparency becomes essential. With a background in large-scale cloud diagnostics and in integrating AI into workflows, Ramya is passionate about rethinking how we measure AI quality and how those measurements shape user confidence.